Complex-valued BERT/RoBERTa model (C-BERT/C-RoBERTa). Here, the input... | Download Scientific Diagram

Transformers for Natural Language Processing: Build innovative deep neural network architectures for NLP with Python, PyTorch, TensorFlow, BERT, RoBERTa, and more: Rothman, Denis: Amazon.com.tr: Books

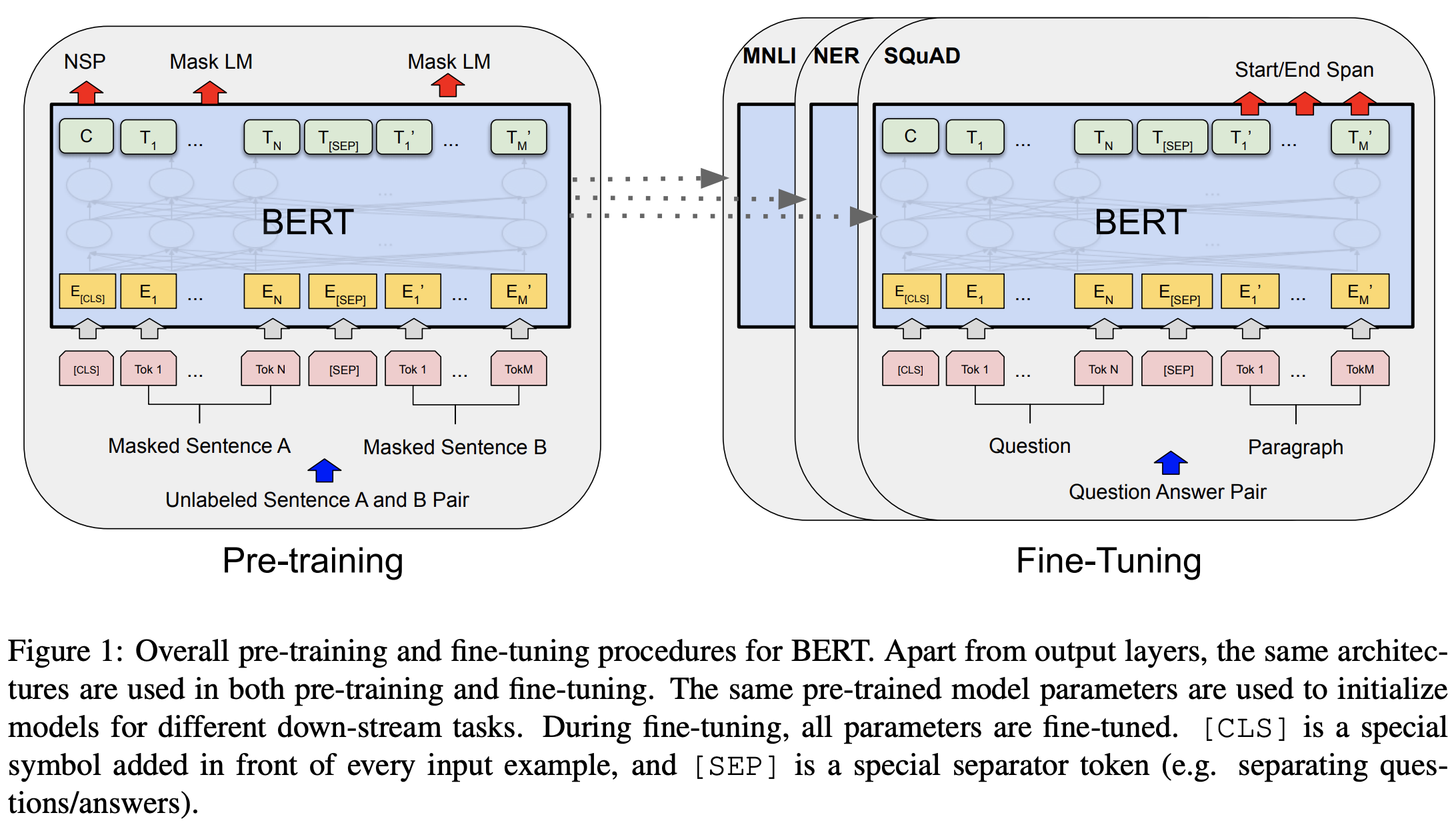

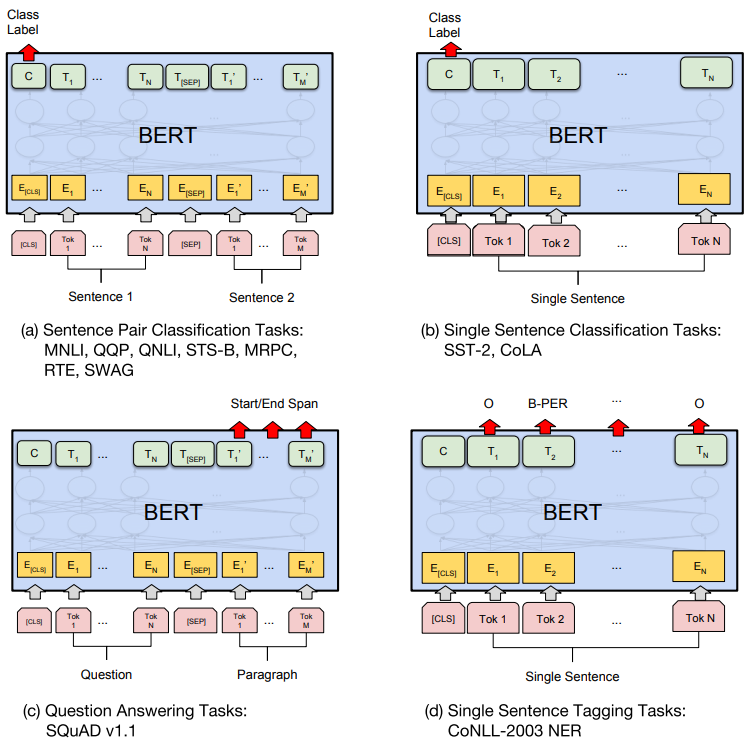

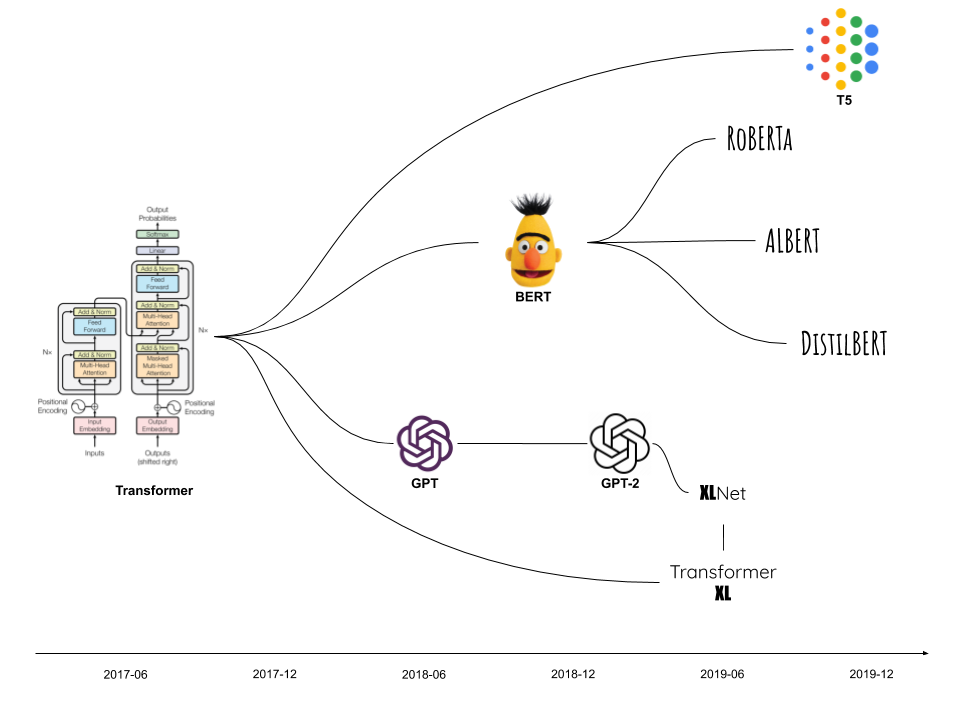

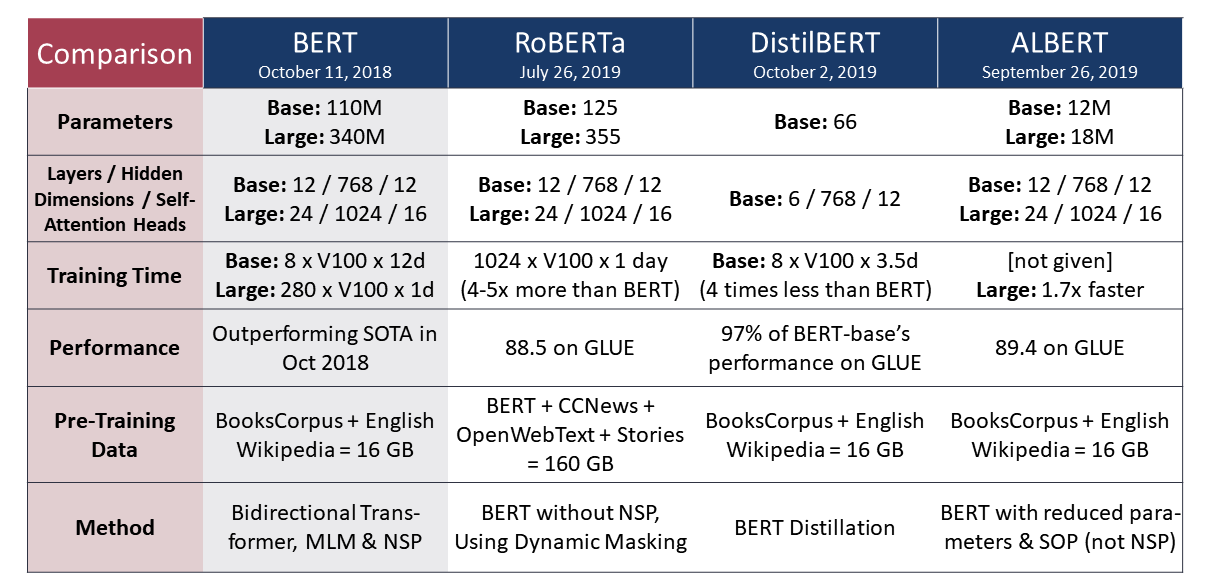

BERT, RoBERTa, DistilBERT, XLNet — which one to use? | by Suleiman Khan, Ph.D. | Towards Data Science

The Difference Engine on Twitter: "BERT, RoBERTa, DistilBERT, XLNet — which one to use? Google's BERT and recent transformer-based methods have impacted NLP landscape, outperforming the state-of-the-art on several tasks. Lately, varying improvements

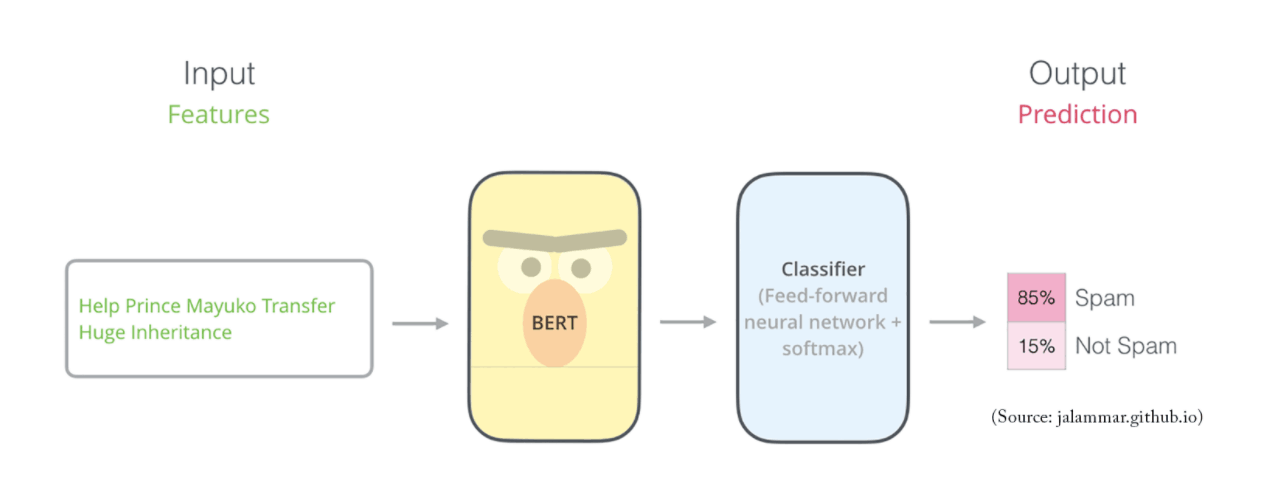

BERT, DistilBERT, RoBERTa, and XLNet simplified Explanation | by Raoof Naushad | DataSeries | Medium